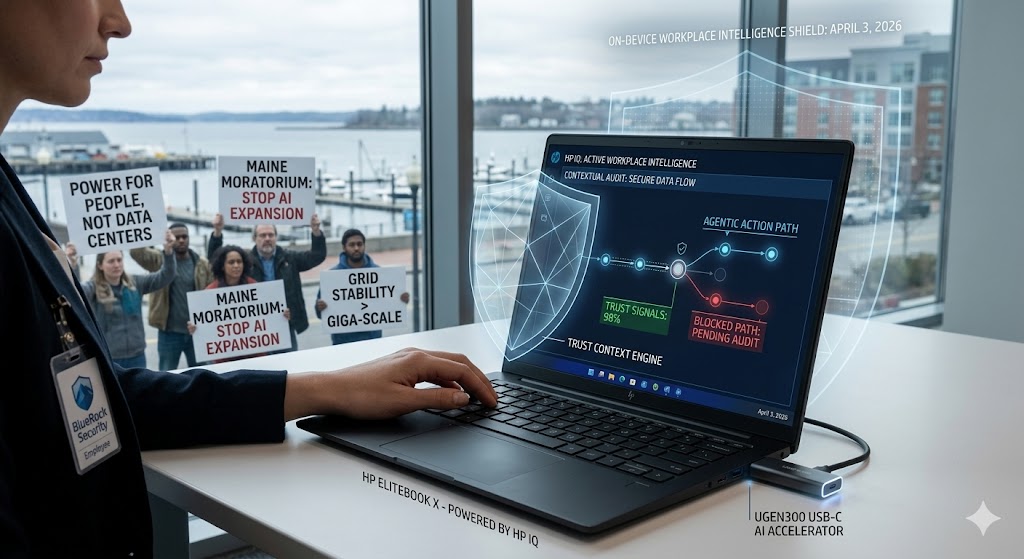

The Record of “On-Device” Governance: April 3, 2026, was defined by the transition from “General AI” to “Localized Workplace Intelligence.” The day was headlined by HP’s launch of HP IQ, a workplace intelligence layer built on a 20-billion-parameter local model that turns PCs into “Context-Aware Teammates,” and BlueRock’s release of the Trust Context Engine to map safe “Agentic Action Paths.” Simultaneously, Maine became the first U.S. state to move toward a moratorium on new large-scale data centers, signaling local environmental pushback against giga-scale expansion. It was the day the AI revolution moved from the abstract cloud to the specific desk and the local grid.

- #1: HP Launches HP IQ: The On-Device Workplace Orchestrator

- #2: Maine Moves to Halt New AI Data Centers: The Energy Standoff

- #3: BlueRock Debuts “Trust Context Engine” for Safe Agentic Velocity

- #4: Anthropic “Claude Code” Leak: Persistent Agents & “Buddy” Revealed

- #5: CoreWeave Doubles Inference Performance with Grace Blackwell

#1: HP Launches HP IQ: The On-Device Workplace Orchestrator

HP Imagine 2026 saw the debut of HP IQ, a workplace intelligence layer that combines a locally run 20-billion-parameter model with proximity-based connectivity (HP NearSense). The platform allows next-gen EliteBook PCs to act as persistent, context-aware assistants that can summarize meetings, move files between devices by drag and drop, and manage IT governance entirely on-device.

- Source: The Futurum Group – April 3, 2026

- How This Impacts You: Privacy-first productivity. You no longer have to worry about your sensitive meeting notes or files being uploaded to a public cloud for “analysis.” HP IQ keeps your “Workplace Knowledge” on your physical hardware, giving you the speed of a personalized agent with the security of an offline machine.

#2: Maine Moves to Halt New AI Data Centers: The Energy Standoff

In a historic legislative step, Maine lawmakers have moved to pause the construction of all new large-scale data centers. Citing “unsustainable” electricity demand and the potential for rising costs to local communities, Maine is the first state to prioritize grid stability over the giga-scale AI infrastructure rush.

- Source: Broadband Breakfast – April 3, 2026

- How This Impacts You: The “Green AI” tax. As more states follow Maine’s lead, the cost of running massive AI models will likely increase. For you, this means the “free” era of high-end AI might be ending, as companies pass on the costs of building more expensive, energy-efficient, or decentralized data centers to the consumer.

#3: BlueRock Debuts “Trust Context Engine” for Safe Agentic Velocity

BlueRock officially launched its Trust Context Engine, a platform designed to map the full “Agentic Action Path.” This allows businesses to track exactly how an AI agent makes a decision, which tools it calls, and whether it stays within its “Trust Signals” before executing a real-world task like a payment or a contract sign-off.

- Source: Solutions Review – April 3, 2026

- How This Impacts You: Accountability in the Agentic Economy. As you start letting AI handle your banking or legal admin, you need to know why it did what it did. This engine acts as a “black box” flight recorder for AI agents, so that if an agent makes a mistake, there is a clear, auditable trail for fixing it and preventing a repeat.

#4: Anthropic “Claude Code” Leak: Persistent Agents & “Buddy” Revealed

A major source code leak from Anthropic this morning revealed advanced plans for Claude Code, a persistent AI agent designed to live within a user’s terminal. The leak also detailed a virtual assistant codenamed “Buddy,” signaling Anthropic’s shift from “Chatbot” to “Always-On Operating System.”

- Source: CoAio News – April 3, 2026

- How This Impacts You: The “AI OS” is coming. This leak suggests that the next version of Claude isn’t just a window you type in; it’s a teammate that stays active in the background, watching your work and offering help before you even ask. It’s the transition from AI as a tool to AI as a permanent digital partner.

#5: CoreWeave Doubles Inference Performance with Grace Blackwell

CoreWeave announced it has achieved a 2x performance boost in the latest MLPerf v6.0 benchmarks using NVIDIA Grace Blackwell systems. The specialized AI cloud provider is now offering “Record-Breaking” inference speeds, specifically optimized for real-time video and complex Reasoning Models.

- Source: Solutions Review – April 3, 2026

- How This Impacts You: Lag-free “Thinking AI.” As reasoning models become more complex, they usually get slower. CoreWeave’s result suggests the “thinking time” of your most advanced AI agents could shrink substantially, making interactions with high-level AI feel closer to a real-time human conversation.

Powered by theGLOBALMARKET.AI – Your AI Authority Hub