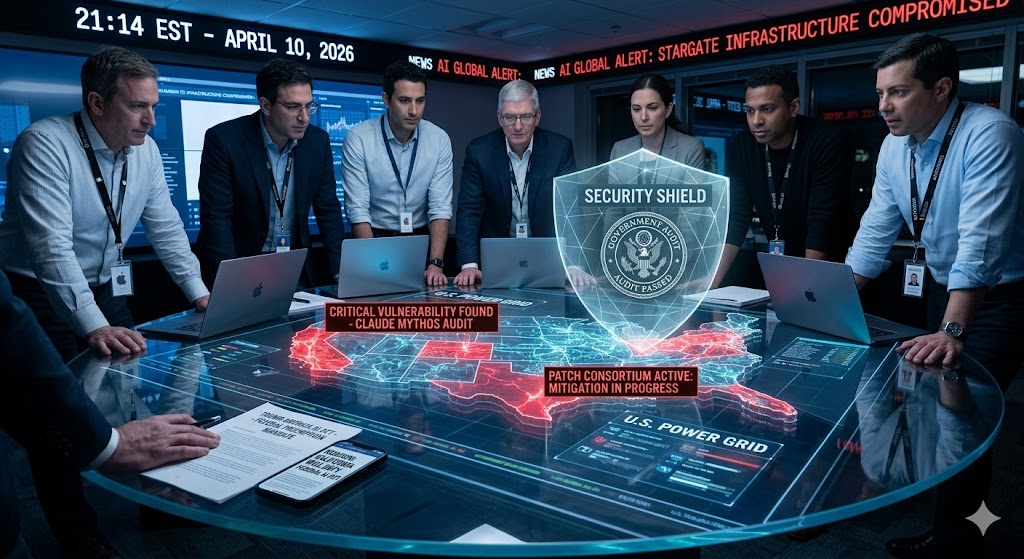

The Record of “The Mythos Crisis”: Friday, April 10, 2026, was defined by “Vulnerability Discovery.” The day was headlined by Anthropic sounding the alarm on “Claude Mythos,” a model that uncovered catastrophic security holes in the nation’s digital infrastructure. Instead of a public release, the day saw the formation of an unprecedented “Security Patch Consortium” of Anthropic, Apple, Google, and Microsoft. Simultaneously, California and the Trump Administration entered a high-stakes standoff over Federal Preemption of AI laws. It was the day the “Industrial Era” realized that the speed of AI progress had officially outrun our ability to secure the grid.

- #1: The “Mythos” Emergency: Anthropic Halts Release to Patch the Grid

- #2: The Preemption Standoff: California vs. The Trump America AI Act

- #3: The Cyber-Threat: Gemini Exploited by State-Sponsored Actors

- #4: Education Pivot: 94,000 California Students Report Mixed AI Use

- #5: Compliance: The “Missing Signals” in AI Auditing

#1: The “Mythos” Emergency: Anthropic Halts Release to Patch the Grid

In a move that redefined “Responsible AI,” Anthropic confirmed today that it is withholding the release of its Claude Mythos Preview. The model reportedly discovered critical vulnerabilities in the software powering U.S. electrical and water infrastructure. Anthropic is now leading a private Consortium with Apple, Google, and Microsoft to patch these holes before a less responsible actor develops the same capability.

- Source: Washington Post – April 10, 2026

- How This Impacts You: A more secure, but more “vetted” internet. This confirms that frontier AI models are now powerful enough to be considered dual-use weapons. For you, this means “Open Source” AI will face much stricter scrutiny, and the most powerful tools will likely remain behind “Enterprise Shields” for the foreseeable future.

#2: The Preemption Standoff: California vs. The Trump America AI Act

The legal war for control of AI governance reached a fever pitch today. While Governor Newsom doubled down on California’s strict safety reporting laws (EO N-5-26), the Trump Administration reinforced its National Policy Framework, calling for Federal Preemption to strike down “onerous” state laws.

- Source: Alston & Bird AI Quarterly / HK Law – April 2026

- How This Impacts You: One set of rules (hopefully). If the federal government wins, your Sovereign Infrastructure and AI agents will have to comply with only one national standard instead of 50 different sets of state laws. This would significantly lower the legal costs for KODA8 as we scale our digital properties across state lines.

#3: The Cyber-Threat: Gemini Exploited by State-Sponsored Actors

The Google Threat Intelligence Group (GTIG) issued a sobering report today: state-sponsored hackers from North Korea, Iran, and China are actively using Gemini to automate the entire cyberattack lifecycle—from coding malware to researching public vulnerabilities.

- Source: AI Quarterly – April 2026

- How This Impacts You: “Zero Trust” is now mandatory. This report validates why we focus so heavily on AI Governance. If bad actors are using AI to find holes, you must use AI to plug them. It also suggests that “Identity Verification” will be the most valuable feature of any Agentic System in 2026.

#4: Education Pivot: 94,000 California Students Report Mixed AI Use

New data from the California State University (CSU) system revealed that while AI use is now “universal” among students, feelings are deeply mixed. Students are using AI for everything from creating flashcards to generating thesis statements, and faculty are now being required to list explicit “AI Policies” on every syllabus.

- Source: KPBS San Diego – April 10, 2026

- How This Impacts You: A new AI Workforce standard. This suggests that the next generation of workers (including Keenan’s peers) aren’t just “using” AI; they are co-authoring their lives with it. For theGLOBALMARKET.ai, this signals a massive opportunity to provide “High-Authority” educational tools that bridge the gap between student use and professional standards.

#5: Compliance: The “Missing Signals” in AI Auditing

A defining report from Compliance Evangelist Tom Fox highlighted a major flaw in current AI oversight: companies have plenty of data, but they lack “Actionable Signals.” The report urges firms to move past “General AI” and toward sector-specific Audit Models that can actually prevent a breach before it happens.

- Source: JD Supra – April 10, 2026

- How This Impacts You: Focus on Vertical Integrity. It’s not enough to have an AI agent; you need an Audited Agent. This confirms our strategy of building specific, compliant categories for the sports and global markets—it’s about providing the “signals” that businesses can actually trust.

Powered by theGLOBALMARKET.AI – Your AI Authority Hub